Announcing vetiver 0.2.0

I’m delighted to announce that vetiver 0.2.0 is now available for both Python and R (from PyPI and CRAN, respectively). The vetiver framework for MLOps tasks provides fluent tooling to version, deploy, and monitor a trained model in either Python or R. Functions handle both recording and checking the model’s input data prototype, and predicting from a remote API endpoint.

You can install vetiver 0.2.0 for Python with:

    python -m pip install vetiver

And for R with:

    install.packages("vetiver")

About 9 months ago, we announced the first release of vetiver, highlighting our focus on making a data scientist’s first model deployment as straightforward as possible while building a tool that can grow and scale to more complex use cases. Since then, we have been hard at work adding support for new models and better ways to version, deploy, and monitor your models. This post highlights several updates we want you to know about: more fluent Docker support, new models, and model documentation and monitoring.

To see all the changes in vetiver 0.2.0, including a few minor breaking changes, check out the release notes for Python or for R.

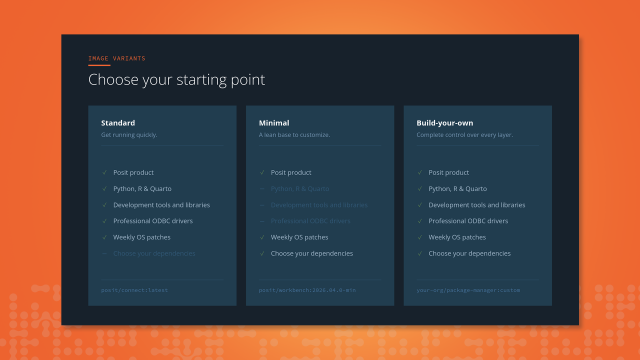

More fluent Docker support

In vetiver 0.2.0, we added a more fluent workflow for generating a Dockerfile and other necessary artifacts:

- vetiver.prepare_docker() for Python

- vetiver_prepare_docker() for R

These functions generate a Dockerfile customized to a particular vetiver model, together with the other needed files (like a FastAPI/Plumber app file and a requirements.txt or renv.lock) to build a Docker image that serves that model. An example Dockerfile for deploying an R model might look like:

    # Generated by the vetiver package; edit with care

    FROM rocker/r-ver:4.2.1
    ENV RENV_CONFIG_REPOS_OVERRIDE https://packagemanager.posit.co/cran/latest

    RUN apt-get update -qq && apt-get install -y --no-install-recommends \
      libcurl4-openssl-dev \
      libicu-dev \
      libsodium-dev \
      libssl-dev \
      make \
      zlib1g-dev \
      && apt-get clean

    COPY vetiver_renv.lock renv.lock

    RUN Rscript -e "install.packages('renv')"
    RUN Rscript -e "renv::restore()"
    COPY plumber.R /opt/ml/plumber.R

    EXPOSE 8000
    ENTRYPOINT ["R", "-e", "pr <- plumber::plumb('/opt/ml/plumber.R'); pr$run(host = '0.0.0.0', port = 8000)"]

Some data science practitioners are already very familiar with Docker and creating the best possible Dockerfile for model deployment doesn’t sound like a hard task. However, we find that there are many data science practitioners who are happy to have support in this area, especially when it comes to best practices for reliable Docker images that are not bloated. We have created an article that focuses on deploying with Docker, including a section on learning Docker basics. Also check out Isabel’s recent talk at PyData NYC that covers topics such as why your versioned model binary is best stored outside your Docker image.

If you are looking for tooling to directly deploy a model in one line (without having to worry about Docker!), you may want to look at Connect together with vetiver_deploy_rsconnect() for R and vetiver.deploy_rsconnect() for Python.

New models

We designed vetiver to be extensible, with generics that can support many kinds of models. Since our last blog post, we have added models such as:

- XGBoost for Python (we already had support for XGBoost for R)

- statsmodels

- stacks ensembles for tidymodels

- GAMs fit with mgcv

We are interested in what other modeling frameworks to support, so please let us know what you would like to use vetiver with! A GitHub issue for either R or Python is a great place to start.

Model documentation and monitoring

MLOps tasks aren’t only about choosing an ML algorithm or building Docker images. The practices to deploy and maintain ML models in production reliably and efficiently also include documenting your model and monitoring your model.

Good documentation helps us make sense of software, know when and how to use it, and understand its purpose; this is true of models as well! The vetiver framework provides support for creating Model Cards based on the paper “Model Cards for Model Reporting” (Mitchell et al. 2019). In Python, the Model Card template is available as a Quarto document you can generate via vetiver.model_card(path = "."); in R, the template is available as an R Markdown template in the package.

Models in production can fail silently, and often the only way you will know is if you are explicitly monitoring the statistical performance of your model using true labels (i.e., feedback from your system) for your new data. In vetiver, we have support for computing and aggregating metrics (including multiple metrics and custom metrics), storing and versioning those metrics, and plotting those metrics. Effective model monitoring is not “one size fits all”, but instead depends on choosing appropriate metrics and time aggregation for a given application. Building better and more complete monitoring features is one of the areas of focus for the vetiver team in 2023, so please let us know what your own model monitoring use cases are like!
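To make the idea of "metrics plus time aggregation" concrete, here is a minimal sketch in plain Python. It is not vetiver's own API or implementation: the function names, the weekly window, and the sample records are all hypothetical and chosen only for illustration. It groups (date, true label, prediction) records by calendar week and applies several metrics, including a custom one, to each group.

```python
from collections import defaultdict
from datetime import date, timedelta


def week_start(day: date) -> date:
    """Return the Monday of the week that contains `day`."""
    return day - timedelta(days=day.weekday())


def mean_absolute_error(pairs):
    """Average absolute difference between truth and prediction."""
    return sum(abs(truth - pred) for truth, pred in pairs) / len(pairs)


def root_mean_squared_error(pairs):
    """Square root of the average squared difference (a custom metric here)."""
    return (sum((truth - pred) ** 2 for truth, pred in pairs) / len(pairs)) ** 0.5


def compute_metrics_by_week(records, metrics):
    """Group (day, truth, prediction) records by week, then apply each metric."""
    grouped = defaultdict(list)
    for day, truth, prediction in records:
        grouped[week_start(day)].append((truth, prediction))
    # Sort by week so the result reads chronologically.
    return {
        week: {name: fn(pairs) for name, fn in metrics.items()}
        for week, pairs in sorted(grouped.items())
    }


# Hypothetical feedback data: (date observed, true label, model prediction).
records = [
    (date(2023, 1, 2), 10.0, 12.0),
    (date(2023, 1, 4), 20.0, 18.0),
    (date(2023, 1, 9), 30.0, 33.0),
    (date(2023, 1, 11), 40.0, 35.0),
]
metrics = {"mae": mean_absolute_error, "rmse": root_mean_squared_error}

weekly = compute_metrics_by_week(records, metrics)
for week, values in weekly.items():
    print(week, values)
```

Swapping `week_start` for a daily or monthly grouping function changes the aggregation window without touching the metrics, which is the kind of per-application choice described above. A rising error from one window to the next is the signal that would otherwise go unnoticed.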

Acknowledgements

We’d like to thank all 35 folks who have contributed to vetiver so far, whether via filing issues or contributing code or documentation.

For Python:

@attilaszombati, @dbkegley, @ganesh-k13, @gsingh91, @has2k1, @isabelizimm, @juliasilge, @lorenzwalthert, @M4thM4gician, @machow, @SamEdwardes, and @xuf12

For R:

@aropele, @arronlacey, @atheriel, @be-marc, @bjfletcher, @blairj09, @btbroderick, @cderv, @cregouby, @csgillespie, @datadavidz, @DavisVaughan, @galen-ft, @ggpinto, @gsingh91, @isabelizimm, @JakeRuss, @jonthegeek, @jtsuvile, @juliasilge, @kmasiello, @machow, @mdneuzerling, @mfansler, @michkam89, @miguellacerda, @Olummy, @RMHogervorst, @SamEdwardes, @schloerke, @shaman-narayanasamy, @smingerson, @t-kalinowski, @topepo, and @tyluRp

Julia Silge