2026-02-27 AI Newsletter

External news

In this newsletter, we often focus on the cloud-hosted models from the frontier AI labs, which typically perform better and are more popular. But local models do have real advantages, and the local model space, like AI more broadly, is advancing rapidly.

Last week saw a notable development on that front: GGML, the team behind llama.cpp, is joining Hugging Face. llama.cpp is essentially the engine that makes local model inference work on consumer hardware, forming the foundation of tools like Ollama and countless other projects. This new partnership gives llama.cpp long-term sustainable resources, ideally allowing it to keep evolving alongside the models it runs. The team also has plans to improve access to local models for casual users.

In the frontier model space, Anthropic released Claude Sonnet 4.6 and Google released Gemini 3.1 Pro. Like the models discussed in our last newsletter, they are both incremental improvements over their predecessors.

Posit news

Simon has been experimenting with an LLM-based code review app focused on interactivity. In this demo R package called reviewer, models can leave suggested changes in a Google Docs-style interface.

Simon was surprised by how poorly frontier models played the role of the reviewer in this environment. Even in situations with more significant reproducibility and rigor issues, models like Claude Opus 4.6 tended to focus on smaller, less important changes like rephrasing comments or removing trailing whitespace from a line.

Terms

Cloud-hosted models are models that you access over the internet via an API. You send your prompt to a company's servers, and they send back a response. This is how people interact with frontier models like Anthropic’s Claude, OpenAI’s GPT, and Google’s Gemini series. Local models, in contrast, run directly on hardware you control, whether that's your laptop, a workstation, or a server inside your organization's network. The key distinction is where the computation happens and who controls the infrastructure.

You might also have heard about open weights models. Open weights models and local models are related, but distinct. If a model has open weights, like OpenAI’s GPT OSS models, it means its weights (the learned numbers that set the strength of each connection in its network) are publicly released and its model files are available for anyone to download. Typically, a model needs to have open weights for you to run it locally, since you can’t download a model with closed weights. However, not all open weights models are local models. Some are so large that they require cloud-scale infrastructure to run, making them open but not local (at least for most users).
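To get a rough sense of why size matters here, a back-of-the-envelope estimate helps: the memory needed just to hold a model's weights is roughly the number of parameters times the bytes used to store each one. The sketch below is illustrative only. The function name and both model sizes are hypothetical, not taken from any specific model, and the estimate ignores the extra memory a model needs while running.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


# Hypothetical sizes, chosen only for illustration.
small = weight_memory_gb(8e9, 4)     # 8 billion parameters stored at 4 bits each
large = weight_memory_gb(400e9, 16)  # 400 billion parameters stored at 16 bits each

print(f"8B parameters at 4-bit: about {small:.0f} GB")
print(f"400B parameters at 16-bit: about {large:.0f} GB")
```

Under these assumptions, the smaller model's weights come to about 4 GB, which fits on many laptops, while the larger model's come to about 800 GB, far more than a single consumer machine can hold.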

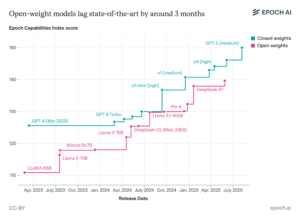

Local models small enough to run on your laptop are far behind frontier models. Epoch AI’s research, however, finds that larger local models, ones that a relatively well-resourced organization might deploy internally, are much more capable, though they still lag behind the closed-weights frontier.

Learn more

- Sharon Machlis wrote an article about using ellmer and vitals to evaluate LLMs in R.

- Simon Willison made some insightful observations about what it means for software engineering that “code is now cheap.” This doesn’t necessarily mean that good code is cheap, but it does mean that some code—code that’s possibly good enough to prove a concept—is cheap.

- Benn Stancil wrote about why successful AI products are often reckless, and what that might mean for data science tools in the future.

Sara Altman