Fifty Releases of Pointblank: a Year of Building Data Quality Tooling

It is difficult to believe that we have reached the fiftieth release of the Python package Pointblank (from initial release v0.1.0 to the just-released v0.19.0). When this project began in late 2024, the goal was straightforward: create a Python library that makes it easy to validate tabular data and communicate results effectively. What has emerged over the past year is a comprehensive toolkit for data quality that spans multiple interfaces, supports dozens of spoken languages for reporting, and integrates with virtually any tabular data source you might encounter in your work. This post will take you through the journey of these fifty releases, highlighting the features and capabilities that have been built along the way.

The development of Pointblank has been guided by several core principles that have remained constant even as the feature set has expanded considerably. I have always held that excellent reporting should accompany comprehensive validation methods. And I have striven to make the library work with data in whatever form it takes, whether that means Polars DataFrames, Pandas DataFrames, database tables via Ibis, or even just paths to CSV and Parquet files. There are now myriad ways to perform validation checks because different contexts call for different approaches. Throughout it all, I have tried to be responsive to what users actually need, building features that data owners have requested and ensuring that the library integrates well into pipeline processes. Each of these themes warrants some exploration, so let us examine them in turn with concrete examples drawn from the documentation.

Comprehensive Validation Methods with Excellent Reporting

The foundation of any data quality library is its validation methods, and Pointblank has accumulated a substantial collection of them over these fifty releases. The validation workflow follows a consistent pattern: you create a Validate object with your data, chain together validation methods that define your data quality rules, and call interrogate() to execute the validation plan. The result is a validation report that provides detailed information about each step.

import pointblank as pb
import polars as pl

# Sample data for demonstration
data = pl.DataFrame({
    "id": range(1, 11),
    "value": [120, 85, 47, 210, 30, 155, 175, 95, 205, 140],
    "category": ["A", "B", "C", "A", "D", "B", "A", "E", "A", "C"],
    "ratio": [0.5, 0.7, 0.3, 1.2, 0.8, 0.9, 0.4, 1.5, 0.6, 0.2],
})

validation = (
    pb.Validate(
        data=data,
        tbl_name="sales_data",
        label="Example validation demonstrating multiple methods"
    )
    .col_vals_gt(columns="value", value=50)
    .col_vals_in_set(columns="category", set=["A", "B", "C"])
    .col_vals_le(columns="ratio", value=1.0)
    .rows_distinct()
    .col_exists(columns=["id", "value", "category"])
    .interrogate()
)

validation

The validation methods span several categories that address different aspects of data quality. Column value validations like col_vals_gt(), col_vals_between(), and col_vals_in_set() check individual values against specified constraints. Row-based validations like rows_distinct() and rows_complete() examine entire rows for duplicates or missing values. Table structure validations like col_exists() and col_schema_match() verify that the data conforms to expected schemas. Aggregate validations like col_sum_gt() and col_sd_lt() check summary statistics. And the recently added data_freshness() method allows you to verify that your data is up to date.

The validation report itself is a Great Tables object, which means it inherits all the formatting and display capabilities that Great Tables provides. Each row in the report corresponds to a validation step, with columns showing the validation type, the columns involved, the parameters used, and the results. The results section shows the total number of test units, how many passed, how many failed, and whether any threshold levels were exceeded. The visual status indicators on the left edge of each row provide an immediate signal about the health of each validation step.
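To make the pass and fail counts in the report concrete, here is a minimal plain-Python sketch of how the test units for a check like col_vals_gt(columns="value", value=50) could be tallied on the sample data. It only illustrates the arithmetic and is not Pointblank's implementation; the helper name tally_test_units is hypothetical.

```python
# Values from the "value" column of the sample data
values = [120, 85, 47, 210, 30, 155, 175, 95, 205, 140]


def tally_test_units(column, predicate):
    """Count test units (one per value) and how many pass or fail a check."""
    n_units = len(column)
    n_passed = sum(1 for v in column if predicate(v))
    n_failed = n_units - n_passed
    return {
        "units": n_units,
        "passed": n_passed,
        "failed": n_failed,
        "fraction_passed": n_passed / n_units,
    }


# Equivalent in spirit to a "greater than 50" column check
result = tally_test_units(values, lambda v: v > 50)
print(result)
```

Each value in the column counts as one test unit, so a ten-row column yields ten units for this kind of step.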

One feature that emerged relatively early in development was the step report, which provides a detailed view of a single validation step. When you encounter failures in your validation report, you often want to know exactly which rows failed and why. The get_step_report() method provides this information in a focused, customizable format.

# Get a detailed step report for the `col_vals_in_set()` validation (step 2)
step_report = validation.get_step_report(i=2)

step_report

[Step report output: Report for Validation Step 2. 2 / 10 test unit failures in column 3. Extract of all 2 rows, with test unit failures in red.]

Step reports highlight the failing rows and clearly indicate which column was under examination. They can be customized with parameters like columns_subset= to show only relevant columns, limit= to cap the number of rows displayed, and header= to provide custom context. The reports even support templating in the header, allowing you to include dynamic information about the validation step alongside your own explanatory text.

Schema validation step reports have a completely different structure, comparing expected versus actual column data types and presence. This format makes it easy to see exactly where the schema expectations differ from reality.

# Define an expected schema for the small_table dataset
schema = pb.Schema(
    columns=[
        ("date_time", "timestamp"),
        ("dates", "date"),
        ("a", "int64"),
        ("b",),
        ("c",),
        ("d", "float64"),
        ("e", ["bool", "boolean"]),
        ("f", "str"),
    ]
)

# Create a validation with a schema check
validation_schema = (
    pb.Validate(
        data=pb.load_dataset(dataset="small_table", tbl_type="duckdb"),
        tbl_name="small_table",
        label="Schema validation example"
    )
    .col_schema_match(schema=schema)
    .interrogate()
)

# Display the step report for the schema validation
validation_schema.get_step_report(i=1)

Report for Validation Step 1: ✗ COLUMN SCHEMA MATCH (COMPLETE, IN ORDER, COLUMN ≠ column, DTYPE ≠ dtype, float ≠ float64)

| TARGET POSITION | EXPECTED POSITION | EXPECTED COLUMN | COLUMN MATCH | EXPECTED DATA TYPE | DATA TYPE MATCH |
|---|---|---|---|---|---|
| 1 | 1 | date_time | ✓ | timestamp | ✗ |
| 2 | 2 | dates | ✗ | date | — |
| 3 | 3 | a | ✓ | int64 | ✓ |
| 4 | 4 | b | ✓ | | |
| 5 | 5 | c | ✓ | | |
| 6 | 6 | d | ✓ | float64 | ✓ |
| 7 | 7 | e | ✓ | bool, boolean | ✓ |
| 8 | 8 | f | ✓ | str | ✗ |

Supplied Column Schema: [('date_time', 'timestamp'), ('dates', 'date'), ('a', 'int64'), ('b',), ('c',), ('d', 'float64'), ('e', ['bool', 'boolean']), ('f', 'str')]

The schema step report presents a side-by-side comparison of expected and actual column information, making mismatches immediately apparent. This is particularly valuable when working with data from external sources where the schema may drift over time, or when onboarding new datasets that must conform to established standards.
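The comparison logic behind such a report can be sketched in a few lines of plain Python. This is only an illustration of a position-by-position schema check, not Pointblank's implementation: the function name compare_schemas is hypothetical, and the target schema is an assumption made up for the example rather than read from a real table.

```python
# Expected schema: (name,) means "any data type is fine", and a list of
# data types means "any one of these is acceptable".
expected = [
    ("date_time", "timestamp"),
    ("dates", "date"),
    ("a", "int64"),
    ("b",),
    ("c",),
    ("d", "float64"),
    ("e", ["bool", "boolean"]),
    ("f", "str"),
]

# Hypothetical target schema (assumed for illustration only)
target = [
    ("date_time", "timestamp(6)"),
    ("date", "date"),
    ("a", "int64"),
    ("b", "string"),
    ("c", "int64"),
    ("d", "float64"),
    ("e", "boolean"),
    ("f", "string"),
]


def compare_schemas(target, expected):
    """Compare schemas position by position, one record per column."""
    rows = []
    for (t_name, t_dtype), exp in zip(target, expected):
        e_name = exp[0]
        name_ok = t_name == e_name
        if not name_ok or len(exp) == 1:
            dtype_ok = None  # data type is not checked in these cases
        else:
            allowed = exp[1] if isinstance(exp[1], list) else [exp[1]]
            dtype_ok = t_dtype in allowed
        rows.append((e_name, name_ok, dtype_ok))
    return rows


for row in compare_schemas(target, expected):
    print(row)
```

Each record holds the expected column name, whether the name matched, and whether the data type matched (None when the type was not checked).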

The combination of comprehensive validation methods and detailed reporting creates a workflow where data quality issues can be identified quickly and communicated clearly. Whether you are checking individual values, examining row level patterns, or validating entire schemas, Pointblank provides the tools to understand your data and share that understanding with others.

Data in Many Forms

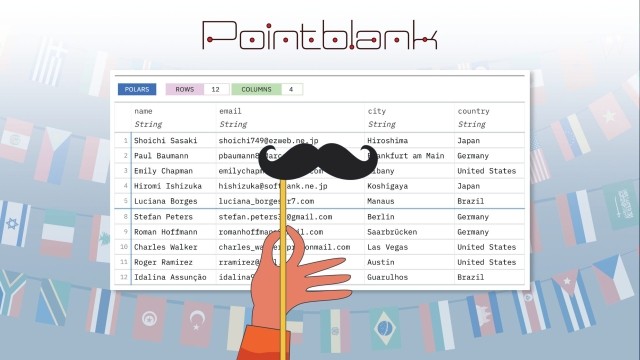

One of the design decisions that has paid dividends over time is the commitment to supporting data in whatever form it arrives. Pointblank does not require you to convert your data to a particular format before validation. If you have a Polars DataFrame, validate it directly. If you have a Pandas DataFrame, that works too! If your data lives in a database that Ibis supports, you can validate it there without materializing the entire table into memory. And if your data is sitting in a CSV or Parquet file, you can simply provide the path.

# Validate a bundled dataset loaded as a Polars DataFrame
# (a path to a CSV or Parquet file could be passed to data= instead)
validation_from_file = (
    pb.Validate(
        data=pb.load_dataset(dataset="small_table", tbl_type="polars"),
        tbl_name="small_table",
        label="Validation from loaded dataset"
    )
    .col_vals_gt(columns="a", value=0)
    .col_vals_between(columns="d", left=0, right=10000)
    .interrogate()
)

validation_from_file

Pointblank Validation: Validation from loaded dataset (Polars, small_table)

| STEP | COLUMNS | VALUES | EVAL | UNITS | PASS | FAIL | W | E | C | EXT |
|---|---|---|---|---|---|---|---|---|---|---|
| 1: col_vals_gt() | a | 0 | ✓ | 13 | 13 (1.00) | 0 (0.00) | — | — | — | — |
| 2: col_vals_between() | d | [0, 10000] | ✓ | 13 | 13 (1.00) | 0 (0.00) | — | — | — | — |

2026-04-13 12:47:02 UTC | < 1 s | 2026-04-13 12:47:03 UTC

The underlying implementation uses Narwhals to provide a unified interface across DataFrame libraries, and Ibis to handle database connections. This architecture means that your validation code remains largely the same regardless of where your data lives. You write your validation plan once, and it works with Polars, Pandas, DuckDB, PostgreSQL, MySQL, SQLite, Spark, BigQuery, and many other backends that Ibis supports. The recent addition of native Spark DataFrame support through Narwhals (without requiring Ibis) further expanded the range of data sources that can be validated efficiently.

The preview() function exemplifies this data-agnostic philosophy. It provides a quick look at any supported table type with consistent formatting, showing row numbers and optionally an information header with details about the table.

# Preview a dataset with informative header
pb.preview(
    pb.load_dataset(dataset="game_revenue", tbl_type="polars"),
    n_head=5,
    n_tail=3,
    incl_header=True
)

[Preview table header: Polars, 2,000 rows, 11 columns]

The ability to work with data in many forms has implications beyond the core validation workflow. The preview() function, for instance, has found use in exploratory data analysis sessions where analysts want a quick look at unfamiliar datasets without writing boilerplate code. Two other functions help you get better acquainted with a new dataset: col_summary_tbl() provides a comprehensive statistical summary of each column, while missing_vals_tbl() gives you an immediate view of missing data patterns across all columns.

# Get a statistical summary of each column
pb.col_summary_tbl(pb.load_dataset(dataset="game_revenue", tbl_type="polars"))

Polars | Rows: 2,000 | Columns: 11

| # | Column type | NA | UQ | Mean | SD | Min | P5 | Q1 | Med | Q3 | P95 | Max | IQR |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | String | 0 (0) | 76 (0.04) | 15 | 0 | 15 | 15 | 15 | 15 | 15 | 15 | 15 | 0 |
| 2 | String | 0 (0) | 618 (0.31) | 24 | 0 | 24 | 24 | 24 | 24 | 24 | 24 | 24 | 0 |
| 3 | Datetime(time_unit='us', time_zone='UTC') | 0 (0) | 618 (0.31) | - | - | 2015-01-01 01:31:03+00:00 | - | - | - | - | - | 2015-01-21 03:59:23+00:00 | - |
| 4 | Datetime(time_unit='us', time_zone='UTC') | 0 (0) | 1989 (0.99) | - | - | 2015-01-01 01:31:27+00:00 | - | - | - | - | - | 2015-01-21 04:06:29+00:00 | - |
| 5 | String | 0 (0) | 2 (<.01) | 2.22 | 0.42 | 2 | 2 | 2 | 2 | 2 | 3 | 3 | 0 |
| 6 | String | 0 (0) | 24 (0.01) | 7.35 | 1.27 | 5 | 5 | 7 | 8 | 8 | 9 | 11 | 1 |
| 7 | Float64 | 0 (0) | 508 (0.25) | 4.34 | 13.21 | 0.004 | 0.01 | 0.09 | 0.38 | 1.25 | 21.99 | 142.99 | 1.16 |
| 8 | Float64 | 0 (0) | 310 (0.15) | 25.63 | 9.55 | 3.2 | 4.2 | 18.5 | 26.5 | 33.82 | 39.5 | 41.0 | 15.32 |
| 9 | Date | 0 (0) | 20 (0.01) | - | - | 2015-01-01 | - | - | - | - | - | 2015-01-21 | - |
| 10 | String | 0 (0) | 6 (<.01) | 7.97 | 2.54 | 5 | 5 | 7 | 7 | 8 | 14 | 14 | 1 |
| 11 | String | 0 (0) | 23 (0.01) | 8.53 | 3.13 | 5 | 5 | 6 | 7 | 12 | 13 | 14 | 6 |

Statistics for string columns refer to the string's length. NA and UQ cells show a count followed by a proportion.

The column summary table provides a wealth of information at a glance: data types, counts of unique values, missing value percentages, and key statistics. This kind of overview is really valuable when you first encounter a dataset and need to understand its structure before diving into validation rules.

Complementing the column summary, the missing_vals_tbl() function focuses specifically on missing data patterns, which is often one of the first things you want to understand about a new dataset.

# View missing data patterns across all columns
pb.missing_vals_tbl(pb.load_dataset(dataset="game_revenue", tbl_type="polars"))

[Missing values report: Missing Values ✓ (Polars, 2,000 rows, 11 columns). Each column is shown against row sectors 1 to 10. Legend: no missing values; proportion missing from 0% to 100%.]

These utilities emerged from the same architectural foundation that supports validation, demonstrating how a commitment to data source flexibility creates opportunities for useful tooling beyond the original scope of the project. When you encounter a new dataset, these functions provide the quick orientation that helps you understand the data before you begin writing validation rules.
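As a rough illustration of what a missing-values view organized by row sectors conveys, the plain-Python sketch below splits a column into ten contiguous sectors and computes the proportion of missing values in each. This is an assumption about the general idea, not a description of how missing_vals_tbl() works internally; the function name missing_by_sector and the sample column are hypothetical.

```python
def missing_by_sector(column, n_sectors=10):
    """Split a column into contiguous row sectors and return the
    proportion of missing (None) values in each sector."""
    n = len(column)
    proportions = []
    for k in range(n_sectors):
        start = k * n // n_sectors
        stop = (k + 1) * n // n_sectors
        sector = column[start:stop]
        if not sector:
            # Fewer rows than sectors: report an empty sector as 0.0
            proportions.append(0.0)
            continue
        n_missing = sum(1 for v in sector if v is None)
        proportions.append(n_missing / len(sector))
    return proportions


# Hypothetical column of 20 values with missing entries near the end
column = list(range(16)) + [None, 17, None, None]
print(missing_by_sector(column))
```

Viewing missingness by sector rather than as a single percentage makes it easier to spot patterns such as gaps concentrated at the start or end of a table.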

Flexible Approaches to Validation

Different contexts call for different approaches to data validation. A data scientist exploring a new dataset in a Jupyter notebook has different needs than a data engineer building an automated pipeline. Pointblank addresses this by providing multiple interfaces for performing validation: the Python API, YAML configuration files, and a command line utility.

The Python API offers the most flexibility and is ideal for complex validation logic. You have access to the full power of Python for preprocessing, custom expressions, and integration with other libraries. The interface makes validation plans readable and maintainable.

One of the most powerful features of the Python API is the pre= parameter, which enables preprocessing of data before validation without modifying the original dataset. This creates enormous flexibility in how you construct validation steps. You can create a computed column and validate that column in a single validation step, and the mutation of the table is completely isolated to that step. The original data remains untouched, and subsequent validation steps see the original table unless they define their own preprocessing.

# Python API with preprocessing: create and validate a computed column
validation_with_pre = (
    pb.Validate(
        data=pb.load_dataset(dataset="small_table", tbl_type="polars"),
        label="Validation with preprocessing"
    )
    .col_vals_gt(
        columns="a",
        value=5,
        pre=lambda df: df.with_columns(pl.col("a") * 2)  # Double values before checking
    )
    .col_vals_gt(
        columns="computed_ratio",
        value=0,
        pre=lambda df: df.with_columns((pl.col("d") / pl.col("c")).alias("computed_ratio"))
    )
    .interrogate()
)

validation_with_pre

Notice the notes that appear in the validation report for steps that use pre=. These notes indicate that preprocessing was applied, helping readers understand that the validation was performed on transformed data rather than the original table. The notes system appears throughout Pointblank to provide contextual information when steps encounter conditions worth flagging, such as column selectors that do not resolve to any columns, schema comparison results, or other situations where additional explanation helps users understand what happened during validation.

This preprocessing approach is particularly valuable when you need to validate derived metrics, normalized values, or relationships between columns that require computation. Rather than preprocessing your entire dataset before validation, you can express the transformation and the validation together, making your validation plan self-documenting and easier to maintain.
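The isolation that step-level preprocessing provides can be illustrated with a small plain-Python sketch. It uses a list of dictionaries as a stand-in for a table and is not how Pointblank implements pre=; the names run_step and add_ratio are hypothetical.

```python
import copy

# A tiny stand-in table: a list of row dictionaries (not a real DataFrame)
table = [
    {"c": 2, "d": 10.0},
    {"c": 4, "d": 2.0},
    {"c": 5, "d": 20.0},
]


def add_ratio(rows):
    """Preprocessing function: return new rows with a computed column."""
    return [{**row, "computed_ratio": row["d"] / row["c"]} for row in rows]


def run_step(rows, column, predicate, pre=None):
    """Apply `pre` to a copy of the table, then check one column."""
    working = copy.deepcopy(rows)
    if pre is not None:
        working = pre(working)
    return [predicate(row[column]) for row in working]


# Step 1 validates the computed column; step 2 sees the original table
step_1 = run_step(table, "computed_ratio", lambda v: v > 1, pre=add_ratio)
step_2 = run_step(table, "c", lambda v: v > 0)

print(step_1)
print(step_2)
print("computed_ratio" in table[0])
```

Because each step works on its own copy, the computed column exists only for the step that asked for it, and the original table is unchanged afterwards.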

YAML configuration files provide a declarative, readable approach that works well for teams. They can be version controlled alongside your data processing code, enable non-programmers to contribute to data quality definitions, and provide clear separation between validation logic and execution code. The yaml_interrogate() function executes these configurations. Here is an example YAML validation file:

demo_validation.yaml

tbl: small_table
df_library: polars
tbl_name: "YAML Validation Example"
label: "Validation defined in YAML"
steps:
  - rows_distinct
  - col_exists:
      columns: [a, b, c, d]
  - col_vals_not_null:
      columns: [a, b]
  - col_vals_gt:
      columns: a
      value: 0

Running this validation is straightforward with yaml_interrogate():

# Execute the YAML-defined validation
yaml_result = pb.yaml_interrogate("demo_validation.yaml")

yaml_result

The command line interface, accessed through the pb command, enables quick data quality checks directly in the terminal. This is particularly useful for CI/CD pipelines, shell scripts, and automation workflows where you need immediate feedback without starting a Python session. The pb validate command runs common validation checks with simple arguments, while pb run executes Python validation scripts from the command line.

The above demonstration shows some of the more essential CLI workflows: using pb info to get table dimensions, running pb validate with checks like rows-distinct and col-vals-not-null, and using the --show-extract option to see exactly which rows are problematic when validation fails. The CLI provides clear, color-coded output that makes it easy to interpret results at a glance. Failing rows can also be saved to CSV files for further investigation, and the --exit-code option makes it straightforward to integrate validation into pipelines that need to halt on data quality failures.

Communication is Key

Data quality is fundamentally a communication challenge. It is not enough to detect problems; you must also communicate those problems to the people who can fix them, in a form they can understand. Pointblank approaches this challenge from several angles.

The validation report itself is designed for communication. It provides a complete picture of data quality in a single view, with visual indicators that draw attention to problems. The report can be customized with labels and governance metadata including owner, consumers, and version information, which helps document data ownership and dependencies.

# Validation with governance metadata and automatic briefs
governance_validation = (
    pb.Validate(
        data=data,
        tbl_name="sales_data",
        label="Q4 Sales Data Quality Report",
        owner="data-platform-team",
        consumers=["ml-team", "analytics", "finance"],
        version="2.1.0",
        brief=True,
    )
    .col_vals_gt(columns="value", value=0)
    .col_vals_in_set(columns="category", set=["A", "B", "C", "D", "E"])
    .interrogate()
)

governance_validation

Pointblank Validation: Q4 Sales Data Quality Report (Polars, sales_data)

| STEP | COLUMNS | VALUES | EVAL | UNITS | PASS | FAIL | W | E | C | EXT |
|---|---|---|---|---|---|---|---|---|---|---|
| 1: col_vals_gt() (brief: "Expect that values in …") | value | 0 | ✓ | 10 | 10 (1.00) | 0 (0.00) | — | — | — | — |
| 2: col_vals_in_set() (brief: "Expect that values in …") | category | A, B, C, D, E | ✓ | 10 | 10 (1.00) | 0 (0.00) | — | — | — | — |

2026-04-13 12:47:03 UTC | < 1 s | 2026-04-13 12:47:03 UTC

Owner: data-platform-team | Consumers: ml-team, analytics, finance | Version: 2.1.0

Notice the automatic brief text that appears in each row of the report, describing in plain language what the validation step is checking. Setting brief=True in the Validate() call enables these autobriefs for all steps, making the report immediately understandable to stakeholders who may not be familiar with validation method names like col_vals_gt() or col_vals_in_set(). You can also provide custom brief text on individual steps if the automatic description does not fit your needs.

Language localization is another dimension of communication that has received significant attention. Validation reports can be generated in dozens of languages, making data quality accessible to international teams. All official EU languages are supported, along with many others, including Arabic, Hindi, Greek, Traditional Chinese, Japanese, Korean, Thai, Hebrew, Indonesian, Ukrainian, Farsi, and more. The lang= parameter in Validate controls the report language.

# Validation report translated to French
french_validation = (
    pb.Validate(
        data=data,
        tbl_name="sales_data",
        label="Rapport de qualité des données",
        lang="fr",
        brief=True,
    )
    .col_vals_gt(columns="value", value=50)
    .col_vals_in_set(columns="category", set=["A", "B", "C"])
    .col_vals_le(columns="ratio", value=1.0)
    .interrogate()
)

french_validation

The actions system enables proactive communication when validation problems occur. You can define actions that trigger when specific threshold levels are exceeded, such as printing messages to the console or sending Slack notifications with the send_slack_notification() function. This transforms validation from a passive reporting exercise into an active monitoring system.

# Validation with threshold-based actions
action_validation = (
    pb.Validate(
        data=data,
        label="Validation with actions",
        thresholds=pb.Thresholds(warning=1, error=3)
    )
    .col_vals_gt(
        columns="value",
        value=100,
        actions=pb.Actions(
            warning="Warning: Some values below 100 threshold",
            error="Error: Many values below 100 threshold"
        )
    )
    .interrogate()
)

action_validation

Error: Many values below 100 threshold

The actions system is quite flexible beyond simple string messages. You can provide callable functions that execute when thresholds are exceeded, enabling custom logging, alerting, or remediation workflows. There is also a templating system that lets you include dynamic information in action messages, such as the step number, the column being validated, or the count of failing test units. This makes it possible to generate informative, context-aware notifications that help recipients understand exactly what went wrong without having to dig through the full validation report.
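To show why the example prints the error message rather than the warning, here is a plain-Python sketch of threshold evaluation with a templated message. It is not Pointblank's implementation: it assumes a level is reached when the count of failing test units is at or above the threshold, and the placeholder names in the message templates ({step}, {column}, {n_failed}) are hypothetical and do not reflect Pointblank's actual template syntax.

```python
# Absolute thresholds: counts of failing test units
thresholds = {"warning": 1, "error": 3}

# Message templates per level; the placeholder names are hypothetical
messages = {
    "warning": "Warning: step {step} on column '{column}' had {n_failed} failing units",
    "error": "Error: step {step} on column '{column}' had {n_failed} failing units",
}


def highest_level(n_failed, thresholds):
    """Return the most severe level whose threshold is met, or None."""
    # Check the most severe level first
    for level in ("error", "warning"):
        if level in thresholds and n_failed >= thresholds[level]:
            return level
    return None


values = [120, 85, 47, 210, 30, 155, 175, 95, 205, 140]
n_failed = sum(1 for v in values if not v > 100)

level = highest_level(n_failed, thresholds)
if level is not None:
    print(messages[level].format(step=1, column="value", n_failed=n_failed))
```

Four of the ten values are not greater than 100, which meets both thresholds, so only the most severe level (error) is reported in this sketch.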

Integration with notebooks and Quarto is seamless because validation reports are Great Tables objects. They render beautifully in Jupyter notebooks, JupyterLab, and Quarto documents without any additional configuration. This makes it easy to include data quality reporting in analytical notebooks and published reports.

Responsive to User Needs

The development of Pointblank has been shaped substantially by user feedback and feature requests. The contributors who have joined the project over these fifty releases have brought valuable perspectives and concrete improvements. The changelog for each release tells the story of this collaborative development.

The DraftValidation class emerged from users who wanted help getting started with validation on new datasets. It uses large language models to analyze your data and generate a validation plan tailored to its structure and content. You provide your data and specify an LLM provider (Anthropic, OpenAI, Ollama, or Amazon Bedrock), and receive executable Python code that you can use directly or modify as needed.

The segmentation feature arose from users who needed to validate data across different groups without writing separate validation plans for each segment. With the segments= parameter, you can split a validation step by the values in a categorical column, generating separate results for each segment.
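Conceptually, segmenting a step means running the same check once per group and reporting each group separately. The plain-Python sketch below illustrates that idea on a small regional dataset with a "greater than 10" check. It is an illustration only, not Pointblank's implementation, and check_by_segment is a hypothetical helper.

```python
from collections import defaultdict

# Rows of (value, region) for a small regional table
rows = [
    (10, "North"), (20, "North"),
    (3, "South"), (40, "South"),
    (50, "East"), (2, "East"),
    (6, "West"), (8, "West"), (60, "West"),
]


def check_by_segment(rows, predicate):
    """Group values by segment and tally passes and failures per segment."""
    groups = defaultdict(list)
    for value, segment in rows:
        groups[segment].append(value)
    results = {}
    for segment, values in groups.items():
        passed = sum(1 for v in values if predicate(v))
        results[segment] = {
            "units": len(values),
            "passed": passed,
            "failed": len(values) - passed,
        }
    return results


segment_results = check_by_segment(rows, lambda v: v > 10)
for segment, tally in segment_results.items():
    print(segment, tally)
```

Separate tallies make it clear which group is responsible for failures, something a single combined count would hide.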

# Validation with segmentation
segmented_data = pl.DataFrame({
    "id": range(1, 10),
    "value": [10, 20, 3, 40, 50, 2, 6, 8, 60],
    "region": ["North", "North", "South", "South", "East", "East", "West", "West", "West"]
})

segmented_validation = (
    pb.Validate(data=segmented_data, tbl_name="regional_data")
    .col_vals_gt(
        columns="value",
        value=10,
        segments="region"
    )
    .interrogate()
)

segmented_validation

The assert_passing() and assert_below_threshold() methods were added for users integrating validation into test suites. They provide clean interfaces for asserting that validations pass, with informative error messages when they do not. The above_threshold() method complements these by returning a boolean indicating whether any steps exceeded a specified threshold level.

Pipeline integration has been a recurring theme. The write_file() and read_file() functions allow validation objects to be serialized to disk and restored later, which is useful for archiving results or passing validations between pipeline stages.

Looking Forward

Fifty releases is a considerable milestone, and it lays a solid foundation for continued work. The architecture that supports multiple data sources, multiple interfaces, and multiple languages provides a stable base for adding new capabilities. Equally important is the investment in testing: Pointblank has many thousands of tests with high code coverage, and this rigor is deliberate. A data quality library must hold itself to the highest standards of correctness, because users who rely on such a tool to find problems in their data should not also have to worry about problems in the tool itself. The contributors who have participated in this project have brought diverse perspectives and concrete improvements, and their involvement has made the library stronger in ways that a single developer could not anticipate.

If you are interested in the design philosophy behind Pointblank, I gave a talk called Making Things Nice in Python that covers how Pointblank and Great Tables prioritize user experience in Python package design. The talk explores why convenient options, extensive documentation, and thoughtful API decisions matter for everyone, even when they challenge conventional Python patterns and practices.

The steadfast commitment to data quality as a discipline remains unchanged. I really do believe that organizations succeed when they can trust their data, and that trust is built through systematic validation and clear communication of results. Pointblank aims to make that validation and communication as straightforward as possible, so that data quality becomes a routine part of every data workflow rather than an afterthought.

Thank you to everyone who has used Pointblank, reported issues, contributed code, or simply provided feedback. The journey from the initial release to the fiftieth has been made richer by your participation. If you have thoughts on the library, whether feature ideas, questions, bug reports, or just general observations, please share them on GitHub Discussions or GitHub Issues. Any sort of feedback is welcome and helps shape the direction of future development. We look forward to the next fifty releases and the continued advancement of data quality tooling in Python.