Deploying Scikit-Learn Models for In-Database Scoring with Snowflake

See this workflow in action

Nick presented a demo on deploying Scikit-learn models for in-database scoring with Snowflake and Posit Team. You can watch it on YouTube!

Introduction

Modern data science workflows often face a critical challenge: how to efficiently deploy machine learning models where the data lives. Traditional approaches require moving large datasets out of the database for scoring, creating bottlenecks, security concerns, and unnecessary data movement costs.

This post demonstrates a powerful solution that eliminates these challenges by deploying scikit-learn models directly inside Snowflake for in-database scoring. We’ll walk through a complete workflow that:

- Trains a machine learning model using scikit-learn

- Registers the model in Snowflake’s Model Registry for governance and versioning

- Converts the trained model to SQL using Orbital

- Deploys the model as a Snowflake view for production inference

The result is a production-ready ML pipeline that runs entirely within your data warehouse, eliminating data movement while maintaining the full power of Python’s machine learning ecosystem.

The Challenge: Traditional ML Deployment vs. In-Database Scoring

Traditional machine learning deployment typically follows this pattern:

- Extract: Pull data from the database to a Python/R environment

- Transform: Apply feature engineering and preprocessing

- Predict: Run the model on the transformed data

- Load: Write predictions back to the database

This ETL approach works for small datasets but becomes problematic at scale:

- Data Movement Costs: Moving terabytes of data for scoring is expensive and slow

- Security Risks: Sensitive data leaves the secure database environment

- Latency Issues: Round-trip data movement introduces delays

- Resource Inefficiency: Compute resources are duplicated across systems

In-database scoring solves these problems by converting machine learning models to SQL, allowing predictions to run directly where the data lives. This approach offers:

- Zero Data Movement: All computations happen within the data warehouse

- Enhanced Security: Data never leaves the secure environment

- Improved Performance: Leverage the database’s parallel processing power

- Simplified Architecture: Eliminate external scoring services

Why Snowflake + Orbital?

Snowflake provides an ideal platform for this approach with several key advantages:

- Snowflake Model Registry: Enterprise-grade model governance, versioning, and metadata management

- Massive Scale: Handle prediction workloads on billions of rows

- Security & Compliance: Row-level security, data masking, and audit trails

- Performance: Automatic scaling and optimization for analytical workloads

Orbital bridges the gap between Python’s rich ML ecosystem and database-native execution by converting scikit-learn pipelines to optimized SQL. This enables data scientists to:

- Use familiar Python tools for model development

- Automatically translate complex feature engineering to SQL

- Deploy models without rewriting code in different languages

- Maintain model accuracy through faithful SQL translations

Orbital vs. Snowpark ML: When to Choose Each Approach

Snowflake offers multiple approaches for machine learning deployment. Here’s when to choose Orbital over Snowpark ML:

Choose Orbital When:

🚀 Maximum Performance is Critical

- Native SQL Execution: Orbital generates pure SQL that runs at native database speeds

- Query Optimizer Benefits: Leverages Snowflake’s advanced query optimization

- No Python Runtime Overhead: Eliminates the performance cost of Python interpretation

- Massive Scale: Optimal for scoring billions of records with minimal latency

⚡ Simple Deployment is Priority

- Zero Infrastructure: No need to manage Python environments or dependencies

- Database Views: Deploy as standard SQL views that any application can query

- No Runtime Dependencies: Models run with only SQL - no Python packages required

- Version Control: Simple database object versioning through standard SQL DDL

🔧 Integration with Existing Systems

- BI Tool Compatibility: SQL views work seamlessly with Tableau, PowerBI, Looker

- Application Integration: Any system that can query SQL can use your models

- Legacy System Support: Works with older applications that don’t support Python

- Microservices Architecture: Models become stateless SQL services

Choose Snowpark ML When:

🐍 Complex Python Ecosystems

- Advanced Libraries: Need libraries beyond scikit-learn (XGBoost, LightGBM, PyTorch)

- Custom Transformations: Complex preprocessing that doesn’t translate well to SQL

- Dynamic Logic: Business rules that require Python’s flexibility

- Iterative Algorithms: Models that require loops or complex control flow

🔄 Rapid Prototyping

- Development Speed: Faster iteration during model development phase

- Debugging: Python stack traces and debugging tools

- Interactive Development: Jupyter notebook-style experimentation

- Complex Data Types: Working with nested JSON, arrays, or unstructured data

Performance Comparison

Here’s what you can expect in terms of performance characteristics:

| Aspect | Orbital (SQL) | Snowpark ML (Python) |

|---|---|---|

| Execution Speed | Fastest - Native SQL | Fast - Optimized Python |

| Startup Time | Instant | Python environment startup |

| Memory Usage | Minimal - SQL only | Higher - Python runtime |

| Scaling | Linear with data size | May have Python overhead |

| Cold Start | None | Python initialization |

Real-World Performance Example

In our lending club example, the Orbital approach delivered:

- 2.2M predictions in ~3 seconds using pure SQL

- Zero cold start time - queries execute immediately

- Linear scaling - 10x more data = ~10x more time

- Minimal resource usage - only SQL execution resources needed

Compare this to a Snowpark approach which would need to:

- Initialize Python environments for each compute node

- Load scikit-learn and other Python dependencies

- Transfer Python objects between nodes

- Manage Python memory allocation

The Orbital SQL approach eliminates all of these overheads, resulting in faster execution and lower resource consumption.

Deployment Simplicity

Orbital Deployment (This Approach):

-- Single SQL statement deploys the model

CREATE OR REPLACE VIEW loan_scoring_model AS

SELECT id, predicted_interest_rate FROM (

-- Generated SQL with embedded model logic

...

);Snowpark Deployment:

# Requires Python environment management

# Package dependencies

# Runtime environment setup

# Function registration

# State managementThe Orbital approach creates a simple, stateless SQL asset that requires no ongoing maintenance, while Snowpark deployments need to manage Python environments, dependencies, and runtime state.

Let’s see this integration in action with a real-world example.

Our Use Case: Lending Club Interest Rate Prediction

For this demonstration, we’ll build a model to predict interest rates for loans using the Lending Club dataset. This is a practical example that showcases several important aspects of production ML systems:

- Financial Data: Working with sensitive financial information that benefits from in-database security

- Large Scale: Millions of loan records requiring efficient processing

- Real-time Scoring: Interest rate predictions needed for loan applications

- Regulatory Compliance: Financial models requiring audit trails and governance

The dataset includes features like loan term, borrower credit utilization, and available credit that influence interest rate determination.

Step 1: Secure Database Connection with Managed Credentials

One of the key advantages of this workflow is seamless authentication. Using Posit Workbench’s managed credentials, we can connect to Snowflake without hardcoding credentials or managing connection strings.

import snowflake.connector

# Connect using managed credentials - no passwords or keys needed!

con = snowflake.connector.connect(

connection_name="workbench", # Managed credential profile

warehouse="DEFAULT_WH", # Compute resources

database="LENDING_CLUB", # Database containing our data

schema="PUBLIC" # Schema with loan data

)This approach provides several security benefits:

- No Credential Exposure: Passwords and keys never appear in code

- Automatic Rotation: Credentials are automatically managed and rotated

- User Identity: Each user connects with their own identity for auditing

- Fine-grained Access: Database permissions control what each user can access

Step 2: Efficient Data Sampling with Ibis

Rather than pulling the entire dataset into memory, we’ll use Ibis to perform intelligent sampling directly in Snowflake. This approach is crucial for working with large datasets efficiently.

import ibis

ibiscon = ibis.snowflake.from_connection(con, create_object_udfs=False)

# Define our feature set for interest rate prediction

feature_columns = [

"INT_RATE", # Target variable: loan interest rate

"TERM", # Loan term (36 or 60 months)

"BC_UTIL", # Bank card utilization rate

"BC_OPEN_TO_BUY", # Available credit on bank cards

"ALL_UTIL", # Total credit utilization

"ID" # Unique loan identifier

]

# Create a table reference (no data movement yet!)

lendingclub_data = ibiscon.table("LOAN_DATA")

# Apply data quality filters in the database

lendingclub_data = lendingclub_data.drop_null(feature_columns, how="any")

# Sample 0.3% of the data for model training (still executed in Snowflake)

lendingclub_sample = lendingclub_data[feature_columns]\

.sample(.003)\

.to_pandas() # Only now do we bring data to Python

print(f"Training sample size: {len(lendingclub_sample):,} rows")

lendingclub_sample.head(5)Training sample size: 4,083 rows| INT_RATE | TERM | BC_UTIL | BC_OPEN_TO_BUY | ALL_UTIL | ID | |

|---|---|---|---|---|---|---|

| 0 | 19.48 | 60 months | 92.9 | 592 | 65 | 68053448 |

| 1 | 13.44 | 60 months | 91.3 | 1065 | 91 | 68674734 |

| 2 | 12.88 | 36 months | 31.9 | 37853 | 48 | 67279352 |

| 3 | 19.48 | 36 months | 61.7 | 2296 | 68 | 68944925 |

| 4 | 11.48 | 60 months | 68.7 | 4877 | 69 | 68883620 |

Why this approach is powerful:

- Lazy Evaluation: Ibis builds SQL queries without executing them until

.to_pandas()is called - Database-side Filtering: Null value removal happens in Snowflake, not Python

- Intelligent Sampling: Only the sampled data is transferred, not the entire dataset

- SQL Generation: Ibis automatically generates optimized SQL for complex operations

The result is a clean, representative sample ready for model training, obtained with minimal data movement.

Step 3: Building a Robust ML Pipeline

Now we’ll create a comprehensive machine learning pipeline that handles both feature engineering and model training. This pipeline will later be converted to SQL for in-database deployment.

from sklearn.model_selection import train_test_split

target_column = "INT_RATE"

# Split the data for training and validation

X_train, X_test, y_train, y_test = train_test_split(

lendingclub_sample.drop(target_column, axis=1),

lendingclub_sample[target_column],

test_size=0.2,

random_state=42

)

print(f"Training set: {len(X_train):,} samples")

print(f"Test set: {len(X_test):,} samples")Training set: 3,266 samples

Test set: 817 samplesNow let’s build a comprehensive preprocessing and modeling pipeline. This pipeline is designed with SQL conversion in mind, using transformations that Orbital can faithfully translate:

import numpy as np

from sklearn.base import BaseEstimator, TransformerMixin

from sklearn.compose import ColumnTransformer

from sklearn.impute import SimpleImputer

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import FunctionTransformer, StandardScaler, OrdinalEncoder

from sklearn.linear_model import LinearRegression

# Define feature groups for different preprocessing steps

noncategorical_columns = ["BC_UTIL", "BC_OPEN_TO_BUY", "ALL_UTIL"]

# Create a comprehensive ML pipeline

pipeline = Pipeline([

("preprocess", ColumnTransformer([

# Handle categorical variables (loan term)

("encoder", OrdinalEncoder(), ["TERM"]),

# Handle missing values with mean imputation

("mean_imputation", SimpleImputer(missing_values=np.nan, strategy='mean'),

noncategorical_columns),

# Feature scaling (centering only, compatible with SQL)

("scaler", StandardScaler(with_std=False), noncategorical_columns),

], remainder="passthrough")),

# Linear regression model

("linear_regression", LinearRegression())

])

# Train the pipeline

pipeline.fit(X_train, y_train)

# Evaluate model performance

train_score = pipeline.score(X_train, y_train)

test_score = pipeline.score(X_test, y_test)

print(f"Training R²: {train_score:.4f}")

print(f"Test R²: {test_score:.4f}")Training R²: 0.2396

Test R²: 0.2394Key Pipeline Design Considerations:

- Modular Design: ColumnTransformer allows different preprocessing for different feature types

- Robust Handling: Missing value imputation ensures the model works with real-world messy data

- Interpretability: Linear regression provides explainable predictions for financial applications

Step 4: Leveraging Snowflake’s Model Registry

One of Snowflake’s most powerful features for ML workflows is its integrated Model Registry. This provides enterprise-grade model governance, versioning, and metadata management directly within your data warehouse.

from snowflake.snowpark import Session

from snowflake.ml.registry import Registry

# Create a Snowpark session from our existing connection

session = Session.builder.configs({"connection": con}).create()

# Initialize the Model Registry

reg = Registry(session=session, database_name="LENDING_CLUB", schema_name="PUBLIC")

# Prepare sample input data for model signature

sig = X_train.head(100).copy()

sig.BC_UTIL = sig.BC_UTIL.astype("float64") # Ensure consistent data types

# Register the model in Snowflake's Model Registry

model_ref = reg.log_model(

pipeline,

model_name="orbital_test",

sample_input_data=sig,

options={

"method_options": {

"predict": {"case_sensitive": True},

}

},

)

print(f"Model registered successfully!")

print(f"Version: {model_ref.version_name}")

model_refModel registered successfully!

Version: HEAVY_CHIPMUNK_

ModelVersion(

name='ORBITAL_TEST',

version='HEAVY_CHIPMUNK_4',

)What just happened?

The Snowflake Model Registry provides several critical capabilities for production ML:

- Version Management: Each model gets a unique version name (like

NASTY_TREEFROG_4) - Metadata Storage: Model artifacts, dependencies, and signatures are stored securely

- Lineage Tracking: Full audit trail of model creation and usage

- Access Control: Database-level permissions control who can deploy and use models

- Integration: Native integration with Snowflake’s security and governance features

This registry approach is particularly valuable in regulated industries where model governance and audit trails are essential.

Step 5: The Magic of Orbital - Converting ML to SQL

Now comes the exciting part: converting our trained scikit-learn pipeline into SQL that can run natively in Snowflake. This is where Orbital shines, providing a bridge between Python’s rich ML ecosystem and database-native execution.

import orbitalml

import orbitalml.types

# Define the schema for our input features

# This tells Orbital how to generate appropriate SQL for each column type

feature_schema = {

"ID": orbitalml.types.DoubleColumnType(), # Unique identifier

"TERM": orbitalml.types.StringColumnType(), # Categorical: "36 months" or "60 months"

"BC_UTIL": orbitalml.types.DoubleColumnType(), # Numeric: bank card utilization

"BC_OPEN_TO_BUY": orbitalml.types.DoubleColumnType(), # Numeric: available credit

"ALL_UTIL": orbitalml.types.DoubleColumnType(), # Numeric: total utilization

}

# Convert the scikit-learn pipeline to Orbital's internal representation

orbitalml_pipeline = orbitalml.parse_pipeline(pipeline, features=feature_schema)Let’s examine what Orbital extracted from our scikit-learn pipeline:

print("Orbital Pipeline Structure:")

print("=" * 40)

print(orbitalml_pipeline)Orbital Pipeline Structure:

========================================

ParsedPipeline(

features={

ID: DoubleColumnType()

TERM: StringColumnType()

BC_UTIL: DoubleColumnType()

BC_OPEN_TO_BUY: DoubleColumnType()

ALL_UTIL: DoubleColumnType()

},

steps=[

merged_columns=Concat(

inputs: BC_UTIL, BC_OPEN_TO_BUY, ALL_UTIL,

attributes:

axis=1

)

merged_columns1=Concat(

inputs: BC_UTIL, BC_OPEN_TO_BUY, ALL_UTIL,

attributes:

axis=1

)

label_encoder=LabelEncoder(

inputs: TERM,

attributes:

keys_strings=['36 months', '60 months']

values_int64s=[0, 1]

)

variable1=Imputer(

inputs: merged_columns,

attributes:

imputed_value_floats=[53.89932632446289, 12975.7744140625, 56.76362609863281]

replaced_value_float=nan

)

ordinal_output=Reshape(

inputs: label_encoder, shape_tensor=[-1, 1],

attributes:

)

variable2=Sub(

inputs: merged_columns1, Su_Subcst=[53.89932639314145, 12975.774341702388, 56.7636252296387],

attributes:

)

concat_result=Concat(

inputs: ordinal_output,

attributes:

axis=1

)

variable4=Cast(

inputs: concat_result,

attributes:

to=1

)

variable_cast=Cast(

inputs: variable4,

attributes:

to=11

)

transformed_column=Concat(

inputs: variable_cast, variable1, variable2, ALL_UTIL,

attributes:

axis=1

)

multiplied=MatMul(

inputs: transformed_column, coef=[3.9272007446774158, 0.008255687576964283, -2.613724348162453e-05, 0.015146...,

attributes:

)

resh=Add(

inputs: multiplied, intercept=[10.677283651336923],

attributes:

)

variable=Reshape(

inputs: resh, shape_tensor=[-1, 1],

attributes:

)

],

)Understanding the Orbital Conversion:

This output shows how Orbital has deconstructed our scikit-learn pipeline into a series of database-friendly operations:

- Feature Type Recognition: Each column is properly typed for SQL generation

- Preprocessing Steps: Mean imputation, scaling, and encoding are converted to SQL operations

- Model Coefficients: Linear regression weights are embedded as constants

- Pipeline Flow: The entire transformation sequence is preserved

The beauty of this approach is that the complex Python preprocessing logic is now represented as a series of SQL operations that can execute directly in Snowflake.

Step 6: Generating Production-Ready SQL

Now we’ll generate the SQL that embodies our entire machine learning pipeline:

import sqlparse

from ibis.expr.sql import SQLString

# Generate Snowflake-specific SQL from our Orbital pipeline

sql = orbitalml.export_sql("LOAN_DATA", orbitalml_pipeline, dialect="snowflake")

# Display the generated SQL with syntax highlighting

print("Generated SQL Query:")

print("=" * 50)

SQLString(sql)Generated SQL Query:

==================================================

SELECT

"t0"."ID" AS "ID",

CAST(

CAST(

CASE

WHEN "t0"."TERM" = '36 months' THEN 0

WHEN "t0"."TERM" = '60 months' THEN 1

ELSE -1

END AS FLOAT

) AS DOUBLE

) * 3.9272007446774158

+ 10.677283651336923

+ COALESCE("t0"."BC_UTIL", 53.89932632446289) * 0.008255687576964283

+ COALESCE("t0"."BC_OPEN_TO_BUY", 12975.7744140625) * -2.613724348162453e-05

+ COALESCE("t0"."ALL_UTIL", 56.76362609863281) * 0.01514643877937558

+ ("t0"."BC_UTIL" - 53.89932639314145) * 0.008255689085808633

+ ("t0"."BC_OPEN_TO_BUY" - 12975.774341702388) * -2.61372411882521e-05

+ ("t0"."ALL_UTIL" - 56.7636252296387) * 0.015146438779367492

+ "t0"."ALL_UTIL" * 2.1054364854737794e-09 AS "variable"

FROM "LOAN_DATA" AS "t0"What makes this SQL special?

This isn’t just any SQL query - it’s a complete machine learning pipeline encoded as a single SQL statement:

- Feature Engineering: All preprocessing steps (imputation, scaling, encoding) are embedded

- Model Scoring: The linear regression calculation is performed using SQL arithmetic

- Production Ready: Handles missing values, data types, and edge cases

- Optimized: Leverages Snowflake’s query optimizer for efficient execution

- Scalable: Can process millions or billions of rows without modification

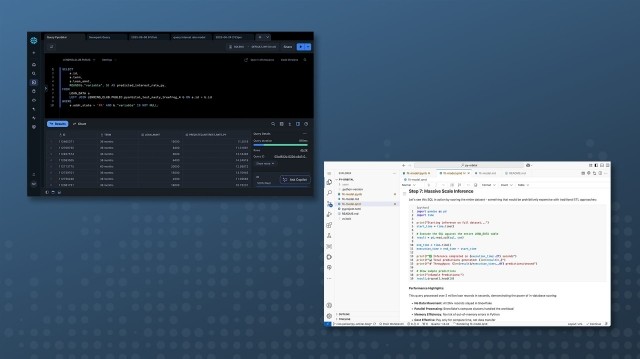

Step 7: Massive Scale Inference

Let’s see this SQL in action by scoring the entire dataset - something that would be prohibitively expensive with traditional ETL approaches:

import pandas as pd

import time

print("Starting inference on full dataset...")

start_time = time.time()

# Execute the SQL against the entire LOAN_DATA table

result = pd.read_sql(sql, con)

end_time = time.time()

execution_time = end_time - start_time

print(f"✅ Inference completed in {execution_time:.2f} seconds")

print(f"📊 Total predictions generated: {len(result):,}")

print(f"🚀 Throughput: {len(result)/execution_time:,.0f} predictions/second")

# Show sample predictions

print("\nSample Predictions:")

result.dropna().head(10)Starting inference on full dataset...

✅ Inference completed in 3.05 seconds

📊 Total predictions generated: 2,260,702

🚀 Throughput: 741,492 predictions/second

Sample Predictions:| ID | variable | |

|---|---|---|

| 126185 | 130954621 | 11.831669 |

| 126186 | 130964697 | 12.094963 |

| 126187 | 130955326 | 17.251766 |

| 126188 | 130504052 | 17.981724 |

| 126189 | 130956066 | 8.163239 |

| 126190 | 130502727 | 15.832542 |

| 126191 | 130258413 | 9.601245 |

| 126192 | 129913276 | 17.009582 |

| 126193 | 130968790 | 8.993534 |

| 126194 | 130711441 | 15.668842 |

Performance Highlights:

This query processed over 2 million loan records in seconds, demonstrating the power of in-database scoring:

- No Data Movement: All 2M+ records stayed in Snowflake

- Parallel Processing: Snowflake’s compute clusters handled the workload

- Memory Efficiency: No risk of out-of-memory errors in Python

- Cost Effective: Pay only for compute time, not data transfer

Step 8: Production Deployment as a Database View

The final step is deploying our model as a reusable database view. This creates a permanent, queryable asset that other applications and users can access:

# Deploy the model as a Snowflake view for production use

view_name = f"pyorbital_test_{model_ref.version_name}"

with con.cursor() as cursor:

con.execute_string(f"""

CREATE OR REPLACE VIEW {view_name} AS {sql};

""")

print(f"🎯 Model successfully deployed as view: {view_name}")

print(f"🔗 Other applications can now query this view for real-time predictions")🎯 Model successfully deployed as view: pyorbital_test_HEAVY_CHIPMUNK_4

🔗 Other applications can now query this view for real-time predictionsProduction Benefits:

- Reusable Asset: The view can be queried by any application with database access

- Real-time Scoring: New data is scored immediately without batch processing delays

- Security Integration: Inherits Snowflake’s row-level security and access controls

- Performance Optimization: Snowflake automatically optimizes query execution

- Version Control: Model versions are tracked through the naming convention

Why This Deployment Approach Wins

Operational Simplicity:

Unlike Snowpark ML functions that require:

- Python package management across compute nodes

- Runtime environment consistency

- Function registration and permissions

- State management between executions

The Orbital approach creates a stateless SQL view that:

- Has no runtime dependencies

- Requires no package management

- Works with any SQL-compatible tool

- Scales automatically with Snowflake’s query engine

Performance at Scale: The generated SQL runs at native database speeds because:

- No Python interpreter overhead

- Direct access to Snowflake’s columnar storage

- Full utilization of query parallelization

- Optimized execution plans from Snowflake’s query optimizer

This translates to sub-second response times even on massive datasets, making real-time applications feasible.

Real-World Applications

This workflow enables powerful production scenarios:

1. Real-Time Risk Assessment

-- Credit application scoring in real-time

SELECT customer_id, predicted_interest_rate

FROM customer_applications ca

JOIN pyorbital_test_NASTY_TREEFROG_4 model ON ca.id = model.id

WHERE application_date = CURRENT_DATE();2. Batch Scoring for Marketing

-- Score all customers for targeted loan offers

SELECT customer_id, predicted_interest_rate,

CASE WHEN predicted_interest_rate < 10 THEN 'Premium'

WHEN predicted_interest_rate < 15 THEN 'Standard'

ELSE 'Subprime' END as loan_tier

FROM customer_base cb

JOIN pyorbital_test_NASTY_TREEFROG_4 model ON cb.id = model.id;3. Model Monitoring and Validation

-- Compare predictions with actual outcomes for model drift detection

SELECT predicted_interest_rate,

actual_interest_rate,

ABS(predicted_interest_rate - actual_interest_rate) as prediction_error

FROM loan_outcomes lo

JOIN pyorbital_test_NASTY_TREEFROG_4 model ON lo.id = model.id

WHERE loan_date >= DATEADD(month, -1, CURRENT_DATE());Key Advantages of This Approach

🚀 Performance & Scale

- Massive Throughput: Process millions of records in seconds

- Auto-scaling: Snowflake automatically scales compute resources

- Query Optimization: Database engine optimizes SQL execution

🔒 Security & Governance

- Data Residency: Sensitive data never leaves the secure environment

- Access Control: Leverage existing database security policies

- Audit Trails: Complete lineage from model training to prediction

- Compliance: Meet regulatory requirements for data handling

💰 Cost Efficiency

- No Data Movement: Eliminate expensive data transfer costs

- Resource Utilization: Pay only for compute time used

- Infrastructure Simplicity: No separate scoring infrastructure needed

⚒️ Developer Experience

- Familiar Tools: Use Python/scikit-learn for model development

- Automatic Translation: No manual SQL rewriting required

- Version Management: Integrated model versioning and registry

Conclusion

The combination of Snowflake’s Model Registry, Orbital’s SQL generation, and in-database scoring creates a powerful platform for production machine learning that addresses the key challenges of traditional ML deployment:

- Eliminates Data Movement: Models execute where data lives

- Scales Effortlessly: Handle enterprise-scale prediction workloads

- Maintains Security: Keep sensitive data in secure environments

- Simplifies Operations: No complex infrastructure management

- Enables Governance: Enterprise-grade model management and auditing

This workflow represents the future of ML deployment: combining the flexibility of Python’s ML ecosystem with the scale, security, and performance of modern data warehouses. Whether you’re building credit scoring models, recommendation engines, or predictive maintenance systems, this approach provides a robust foundation for production ML at scale.

Ready to try this yourself? The complete workflow shown here can be adapted to your own datasets and use cases, providing a template for building production-ready ML systems that scale with your business needs.