Databases with Posit

Questions?

Visit the companion discussion topic on Posit Community to post questions or thoughts on this blog post.

Introduction

You likely learned to analyze data using files stored locally on your laptop, but in the enterprise, data is increasingly stored in relational databases. This article will teach you essential tools for working with relational databases, focusing on three examples: DuckDB, Snowflake, and Databricks. For each database type, we’ll discuss the recommended workflow for both R and Python.

If you’re used to working with data frames, you might think of a relational database as a collection of data frames. In database terms, these are called tables instead of data frames, but the idea is similar.

Below is a summary table of our recommended approach for each database and language.

| Language | Database | Recommended Environment | Connection method | Manipulation method |

|---|---|---|---|---|

| R | DuckDB | Any | DBI and duckdb | duckplyr |

| Python | DuckDB | Any | Ibis | Ibis |

| R | Snowflake | Snowflake Native Posit Workbench App | DBI and odbc | dbplyr |

| Python | Snowflake | Snowflake Native Posit Workbench App | Ibis | Ibis |

| R | Databricks | Posit Workbench Databricks Integration | sparklyr or DBI and odbc | sparklyr or dbplyr |

| Python | Databricks | Posit Workbench Databricks Integration | Ibis, Databricks Connect, and Databricks SDK | Ibis |

Before we dive into the details, let’s define some useful terms that we’ll use throughout the article.

Posit Workbench

Posit Workbench is a data science platform that hosts browser-based IDEs in a remote server environment. You can use RStudio Pro, VS Code, Jupyter Notebook, or JupyterLab on Workbench.

As you’ll see later on, there are Workbench integrations for Databricks and Snowflake. These integrations make it easy to work with Snowflake and Databricks data from within Posit tools.

Database management system (DBMS)

Database management systems, or DBMSs, run databases. DuckDB, Snowflake, and Databricks are all examples of DBMSs.

ODBC

ODBC, or Open Database Connectivity, is a standard interface to different database management systems. ODBC allows you to use the same code to connect to different database management systems. You just need to switch out the driver, which translates ODBC commands into whatever type of commands your database understands.

Do I need to write SQL?

At some point, you’ll likely need to use some form of SQL to interact with relational databases. However, there are various R and Python packages that provide familiar interfaces to databases, potentially eliminating the need to write raw SQL. For example, with the R package dbplyr, you manipulate database tables using familiar dplyr syntax. Similarly, Ibis for Python provides a common interface to various databases and other backends.

Air quality data

To demonstrate how to connect to these databases and manipulate the data therein, we recreated the same database in DuckDB, Snowflake, and Databricks. AIR_QUALITY is a database with four tables: CARBON_MONOXIDE, NITROGEN_DIOXIDE, OZONE, and SULFUR_DIOXIDE. Each table contains historical data on the levels of the pollutant in the United States. The data is from the EPA.

Common patterns

In the following sections, we’ll cover our recommended approaches for working with DuckDB, Snowflake, and Databricks in R and Python. You’ll notice some common patterns.

The R code for connecting to databases and working with tables looks very similar across different DBMSs. There are R packages that can connect to a variety of databases, as well as ways of manipulating database tables that all use the popular dplyr interface. While we’ll technically use different R packages to manipulate DuckDB, Snowflake, and Databricks tables (duckplyr, dbplyr, and sparklyr, respectively), each uses the dplyr interface and then translates that dplyr code into the correct version of SQL.

All the code will generally follow this pattern:

- Connect to the database. You can use the DBI package, along with a helper function that supplies a driver, to connect to DuckDB, Snowflake, and Databricks. We’ll show you the exact code in each corresponding section below.

con <-

DBI::dbConnect(

drv = a_specific_database_driver(),

"other connection details"

)- Create a reference to a table. Along with the connection created in Step 1, use the dplyr function

tbl()to create a reference to a table in your database.

my_table <- dplyr::tbl(con, "my_table_name")- Create queries by writing R code. Use an R package (dbplyr, sparklyr, or duckplyr) to manipulate your table by writing R code that is automatically translated into SQL and run on DuckDB, Snowflake, or Databricks. This eliminates the need to write raw SQL.

# Looks like normal dplyr, but is translated into SQL under-the-hood

my_table |>

select(col_1, col_2) - Use

collect()to execute a query and pull data into R. dbplyr, sparklyr, and duckplyr commands are all lazily evaluated, which means that the query is never run on the database unless explicitly requested. You can trigger R to execute a query and return the entire result as a tibble withdplyr::collect(). To learn more, see Generating queries.

my_table_df <-

my_table |>

select(col_1, col_2) |>

collect()On the Python side, we’ll use Ibis to connect to DuckDB, Snowflake, and Databricks and manipulate tables. Ibis is an open source dataframe library that works with a wide variety of backends, including DuckDB, Snowflake, and Databricks.

The code will look very similar for each DBMS, and also looks fairly similar to the R code shown in the other tab.

- Connect to the database. Use

ibis.connect()to connect to the database. As you’ll see later, you’ll need a different Ibis backend depending on the database type. However, the code will look similar to the following:

import ibis

con = ibis.connect(database_details, other_database_details)- Create a table expression. Using the connection created in Step 1, use the Ibis function

table()to create an IbisTablethat we’ll be able to manipulate and analyze.

my_table = con.table("my_table_name")- Create queries by writing Ibis code. Use Ibis to explore or manipulate the table. To learn more about manipulating data with Ibis, see Getting started.

my_table.filter(my_table.col_1 == 1)- Execute a query. Ibis lazily evaluates commands, which means that the full query is never run on the database unless explicitly requested. Call

.to_pandas()to force Ibis to evaluate a query..to_pandas()returns the result as a pandasDataFrame.

my_table.filter(my_table.col_1 == 1).to_pandas()DuckDB

While both Snowflake and Databricks are paid products, DuckDB is a free and open-source solution. You might encounter data already in a DuckDB database, or use DuckDB to speed up queries on data stored elsewhere in a different format. We’ll cover how to connect to a DuckDB database that already exists, using both R and Python.

There’s no special Workbench integration for DuckDB, so the code shown below will work locally or on Workbench. Depending on how your DuckDB database is set up, however, you may need to specify additional credentials.

Connect

To use R to connect to DuckDB, you’ll need the DBI and duckdb packages. DBI connects R to database management systems. duckdb provides an R API to DuckDB.

Use the function duckdb::duckdb() and pass the name of the database to duckdb()’s dbdir argument. Then, use dplyr::tbl() to access a particular table, just as we’ve done for the other methods.

con <- DBI::dbConnect(duckdb::duckdb(dbdir = "air_quality.db"))

ozone <- dplyr::tbl(con, "ozone")

ozone |>

head()To connect in Python, we’ll need the Ibis library. To install Ibis and the necessary dependencies for the DuckDB backend, run:

pip install 'ibis-framework[duckdb]'Then, use ibis.duckdb.connect() and table() to connect to your DuckDB database and create a reference to a table.

import ibis

con = ibis.duckdb.connect("air_quality.db")

ozone = con.table("ozone")Manipulate

In R, use duckplyr to work with DuckDB tables. duckplyr is similar to dbplyr, allowing you to write normal dplyr code, which is then automatically translated into SQL.

library(duckplyr)

ozone |>

filter(year(date_local) == 2020)duckplyr translates the filter() call into SQL, then sends the SQL to the database and retrieves the result. If you want to see the underlying SQL code, call dplyr::show_query().

ozone |>

filter(year(date_local) == 2020) |>

dplyr::show_query()To save space, R will only return the first few rows of the result as a preview. To import the entire result into R, where it can be stored as a data frame, use collect().

ozone_2020 <-

ozone |>

filter(year(date_local) == 2020) |>

collect()In Python, you can continue using Ibis to manipulate DuckDB tables. Learn more about manipulating data with Ibis here.

ozone.filter(ozone.date_local.year() == 2020)Tip

If you’d like to preview the results of your queries, set ibis.options.interactive = True to turn on interactive mode.

Snowflake

Snowflake is a popular cloud-based data warehousing platform that allows you to store, manage, and analyze large amounts of data quickly and easily. There are various ways to use Snowflake data and run computations on Snowflake, including using Snowflake’s own notebooks or downloading drivers and connecting to Snowflake locally.

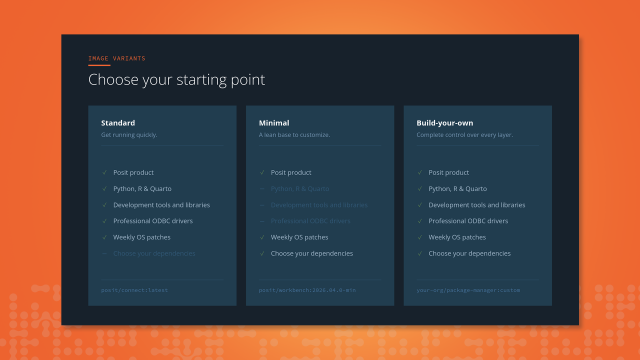

If you have a Posit Workbench license, we recommend using the Snowflake Native Posit Workbench App, accessible through Snowflake’s Marketplace.

The native app runs Posit Workbench from within Snowflake and handles your Snowflake credentials for you. This makes it easy to connect to and run code with Snowflake from within a familiar IDE. For more details about the native app, see Analyze Data with R using Posit Workbench and Snowflake.

Note

If you don’t have a Posit Workbench license, you can still access Snowflake data from within Posit tools, but you’ll need to set up your credentials yourself and download the Snowflake ODBC Driver.

In the next section, we’ll show you how to connect to Snowflake using the native app using R and Python.

Connect

The code to connect to Snowflake from the Snowflake Native Posit Workbench App looks similar to the code we used to connect to DuckDB. Just like with DuckDB, we’ll use DBI::dbConnect() to connect to Snowflake.

In the code below, we use the DBI function dbConnect() to connect to Snowflake. We specify the driver using the drv argument, and specify the Snowflake ODBC driver using the function odbc::snowflake(). The odbc package provides an interface to ODBC drivers.

library(DBI)

library(dbplyr)

conn <-

DBI::dbConnect(

drv = odbc::snowflake(),

warehouse = "DEFAULT_WH",

database = "AIR_QUALITY",

schema = "PUBLIC"

)conn is a connection object. We can use conn and the dplyr function tbl() to access specific tables in the AIR_QUALITY data.

ozone <- dplyr::tbl(conn, "OZONE")Note

We don’t need to manually provide any credentials to DBI::dbConnect() because they are handled by the native app.

In Python, we’ll use Ibis to connect to Snowflake and manipulate tables. First, install Ibis with the Snowflake backend:

pip install 'ibis-framework[snowflake]'Then, use ibis.snowflake.connect() to create a connection. You’ll need to specify a warehouse, database, and catalog.

Note

Snowflake calls collections of databases schemas, but Ibis calls them catalogs.

If you’re using the Posit Workbench Snowflake Native App, you’ll also need to specify connection_name="workbench". This allows ibis.snowflake.connect() to use the built-in credentials set up for you in the native app.

import ibis

con = ibis.snowflake.connect(

warehouse="DEFAULT_WH",

database="AIR_QUALITY",

catalog="PUBLIC",

connection_name="workbench"

)Note

If you’re not using the native app, you’ll need to specify a user, account, and password or other authentication method yourself. See https://ibis-project.org/backends/snowflake#connect for more details.

Then, use table() to create a table.

ozone = con.table("OZONE")Manipulate

Now that we have the ozone table, we can manipulate and explore it.

In R, we recommend using dbplyr to manipulate Snowflake tables. dbplyr is a database backend for dplyr, allowing you to write normal dplyr code, which is then translated into SQL.

Below, we use what looks like normal dplyr code to filter ozone to only include 2020 data.

library(dbplyr)

ozone |>

filter(year(date_local) == 2020)Again, you can use show_query() to see the underlying SQL query, and collect() to execute the entire query and pull the results into R.

In Python, continue using Ibis to manipulate the table. The code below is identical to the code we used to filter the table in the DuckDB section.

ozone.filter(ozone.date_local.year() == 2020)Databricks

Similar to Snowflake, you can use Databricks to store and perform computations on data. Databricks is built upon Apache Spark, an open-source analytics engine. This means you can use Spark to run analyses, conduct machine learning, etc., with your data. We’ll show you how to use R and Python interfaces to Spark.

If you have Posit Workbench, you can use the Databricks Integration to easily access Databricks data, run code on Databricks, and interact with other Databricks resources like MLflow models. If you don’t have a Posit Workbench license, you can still access Databricks data from within Posit tools. You’ll just need to manage your Databricks credentials yourself.

Note

In Databricks, a database is usually called a “schema”. For more information about how Databricks organizes data, see Database objects in Databricks.

Connect

To connect to Databricks on your own, you’ll need to create and start a Databricks cluster. If you’re using RStudio Pro and the Posit Workbench Databricks Integration, you can control clusters in the Databricks pane. After starting a cluster, you can find the cluster ID by expanding the cluster info. You’ll need the cluster ID when writing your connection code.

You can also control clusters in the Databricks UI under the Compute tab. The cluster ID is the portion of the URL that comes after /clusters/ and before ?.

Because Databricks is built upon Apache Spark, you can use the sparklyr package to connect. sparklyr is an R interface to Apache Spark.

To connect with sparklyr, use the function spark_connect() with method = "databricks_connect". You’ll need to supply your own cluster ID (see the tip below). If you’re using the Posit Workbench Databricks integration, the cluster ID is the only thing you’ll need to manually supply when making a connection to Databricks.

library(sparklyr)

con <- spark_connect(

cluster_id = "[Cluster ID]",

method = "databricks_connect"

)Note

If you want to use sparklyr, you’ll need to install both sparklyr and pysparklyr. For more information, see https://spark.posit.co/deployment/databricks-connect.html.

Alternatively, you can use DBI and odbc to connect to Databricks. You’ll need to supply dbConnect() with a httpPath. To find the httpPath, navigate to the cluster you’re using in the Databricks UI. Then, click on Advanced Options > JDBC/ODBC and copy the value under HTTP Path.

con <-

DBI::dbConnect(

odbc::databricks(),

httpPath = "[Value found under Advanced Options > JDBC/ODBC in the Databricks UI]"

)Regardless of connection method, create a table using dplyr::tbl(). You can use the dbplyr function in_catalog() to specify the catalog, schema, and table name.

ozone <- dplyr::tbl(con, in_catalog("demos", "air_quality", "ozone"))Because Databricks is built upon Apache Spark, we’ll use Ibis’s PySpark backend to connect to Databricks. We will also need Databricks Connect and the Databricks SDK for Python to connect to Databricks and create a SparkSession, which will allow us to program with Spark via Python.

pip install 'ibis-framework[pyspark]'

pip install databricks-connect

pip install databricks-sdkAfter installing the necessary libraries, use databricks.connect and databricks.core to create a SparkSession. Pass profile="workbench" to Config() if you’re using the Posit Workbench Databricks integration. This will allow Config() to use the built-in Databricks credentials. Supply your cluster ID to the cluster_id argument.

import ibis

from databricks.connect import DatabricksSession

from databricks.sdk.core import Config

config = Config(profile="workbench", cluster_id=[Cluster ID])

session = DatabricksSession.builder.sdkConfig(config).getOrCreate()Then, use ibis.pyspark.connect() to connect to the SparkSession with Ibis and table() to create a table. Specify the catalog, schema, and table in a string with the format "catalog.schema.table".

con = ibis.pyspark.connect(session=session)

ozone = con.table("demos.air_quality.ozone")Manipulate

In R, you can manipulate Databricks tables using dplyr functions, regardless of whether you connected with sparklyr or DBI/odbc. dbplyr or sparklyr will automatically translate dplyr commands to Spark SQL.

Again, for either method, you can use show_query() to see the underlying SQL query, and collect() to execute the entire query and pull the results into R.

library(sparklyr)

library(dplyr)

ozone <- tbl(sc, dbplyr::in_catalog("demos", "air_quality", "ozone"))

ozone |>

filter(year(date_local) == 2020)library(dbplyr)

ozone |>

filter(year(date_local) == 2020)Tip

How do you choose between a Spark or ODBC connection? We recommend using sparklyr to create a Spark connection if:

- You want to use Apache Spark.

- You need a running cluster for your work. If you don’t need a running cluster (e.g., just accessing data in a Shiny app), we recommend using an ODBC connection.

In Python, you can continue using Ibis to manipulate Databricks tables. This code is identical to the code we used for DuckDB and Snowflake.

ozone.filter(ozone.date_local.year() == 2020)Tip

Take a look at the Posit Solutions Databricks guide to learn more about best practices for working with Databricks.

Conclusion

We’ve now covered how to connect to and manipulate data from three different relational databases using similar tools. In R, you saw how to connect with DBI and odbc and then manipulate data with a dplyr interface. In Python, you saw how to connect and manipulate data with Ibis.

To learn more, see:

- Workbench-managed Databricks Credentials

- Analyze data with R using Posit Workbench and Snowflake

- The duckplyr, dbplyr, odbc, DBI, and sparklyr websites

- The Ibis website